Blockchain Technology for Big-data Sharing in Material Genome Engineering

Material Genome Engineering (MGE) has revolutionized materials science by enabling data-driven discovery, design, and deployment of advanced materials5,63,64. The cornerstone of this transformative approach lies in the effective sharing of large-scale, heterogeneous datasets encompassing computational models, experimental measurements, and theoretical predictions. Establishing a comprehensive data-sharing framework not only accelerates scientific progress but also fosters cross-disciplinary collaboration, ensuring data reproducibility and driving technological innovation21. However, the inherently complex and sensitive nature of materials data requires a well-defined lifecycle management system. This system must address critical aspects, such as data integration, trading and circulation, data-driven computation, governance, and security and privacy. Each stage plays a pivotal role in enabling transparent, scalable, and secure data sharing, ultimately supporting the broader goals of MGE in advancing materials research and industrial applications1.

Blockchain-Driven Opportunities in Materials Data Sharing

The rapid advancement of materials science has driven the development of various data platforms within the Material Genome Engineering (MGE) initiative1, such as the Materials Project12, AFLOW13,14, the Open Quantum Materials Database (OQMD)15, and the National Material Data Management and Services (NMDMS)4,5. These platforms play a pivotal role by aggregating vast repositories of computational and experimental materials data. Through user-friendly interfaces, advanced query systems, and integrated tools for data analysis and visualization, they have become indispensable to researchers aiming to accelerate materials discovery and design.

However, despite their success, these platforms exhibit critical limitations rooted in their centralized architectures. Centralization often results in scalability bottlenecks, where the ever-growing influx of materials data strains storage and processing capacities. Furthermore, centralized frameworks are more vulnerable to data breaches due to single points of failure, raising concerns about data security and system reliability. Ensuring data provenance also poses a significant challenge, as tracking the entire lifecycle of data from generation to usage becomes complex in such architectures.

At the same time, challenges in multi-modal data storage and retrieval further exacerbate the limitations of current materials data platforms. Materials science research involves a wide range of data types, including structural information, experimental parameters, computational simulation results, images, and natural language descriptions. These heterogeneous datasets are often stored in disparate formats and databases, lacking unified standards for integration. Existing retrieval tools are typically designed for single-modal data, making it difficult to perform joint searches across text, image, and structural data65. This limitation significantly hampers researchers’ ability to uncover potential correlations and discover new materials across modalities.

Interoperability66 remains another pressing issue. Many current platforms operate in isolation, adhering to unique data schemas and access protocols. This lack of standardization limits seamless data exchange and integration, which is essential for fostering global collaboration and comprehensive materials research. As the volume and complexity of materials data continue to expand, the limitations of current systems necessitate a paradigm shift toward more robust and scalable frameworks.

Blockchain-based MGE platforms5,23 offer a transformative solution to critical data management challenges by leveraging decentralized, secure, and transparent architectures. These platforms enhance resilience through distributed systems, ensure data integrity with immutable ledgers, and enable precise provenance tracking. Automated access control via smart contracts strengthens security, while cross-platform interoperability facilitates seamless integration across disparate repositories. Notably, blockchain-based metadata retrieval introduces innovative opportunities67. Decentralized metadata networks supported by blockchain enable efficient, trustworthy multi-modal data retrieval, reducing fragmentation caused by data silos. Blockchain’s transparency and immutability ensure reliable metadata processes, while smart contracts allow personalized retrieval rules tailored to user needs. This model accelerates knowledge discovery by uncovering deep relationships within heterogeneous data, driving innovation in materials science.

Data Integration

Data integration68,69 forms the backbone of Material Genome Engineering (MGE), enabling the aggregation, harmonization, and management of diverse datasets that fuel materials discovery and innovation. Materials data, inherently complex and heterogeneous, span various scales from atomic-level simulations to macroscopic mechanical property measurements70. These datasets often originate from diverse sources such as computational models, experimental laboratories, and industrial processes, each generating data with distinct formats, structures, and metadata definitions. Integrating such diverse datasets is essential for deriving meaningful scientific insights, supporting AI-driven analysis, and fostering collaborative research through unified data environments71.

Effective data integration involves several interdependent steps aimed at ensuring consistency, usability, scalability, and data quality. Typical data integration processes in big-data sharing platforms are shown in Fig. 2.

1. The process begins with comprehensive data collection, consolidating relevant datasets from various sources while ensuring completeness and contextual accuracy. Crucially, established principles, specifically the FAIR (Findable, Accessible, Interoperable, and Reusable) principles72 and the CARE (Collective Benefit, Authority to Control, Responsibility, and Ethics) principles73, should underpin the entire integration process as guiding principles rather than being treated as metadata standards. These principles provide high-level goals for responsible and equitable data management, while their operationalization relies on community-driven metadata standards, persistent identifier (PID) policies, and machine-actionable schemas. Together, they provide standardized and comprehensive metadata to support seamless data discovery, integration, and reuse.

2. Following data collection, data cleaning and preprocessing74 address inconsistencies, missing values, and data errors, establishing a uniform data foundation. Data transformation and normalization further ensure compatibility by standardizing formats, units, and scales, enabling meaningful comparisons across datasets from different research environments. Metadata annotation75 then documents experimental conditions, computational parameters, and contextual details, enhancing interpretability and supporting advanced data analysis workflows. To ensure datasets meet strict usability and integrity criteria, robust data quality control measures must be consistently applied, including systematic validation, cleaning protocols, rigorous quality assessments, and standardized data processing workflows.

3. Finally, data storage and indexing organize datasets into searchable repositories, streamlining efficient retrieval67 and facilitating data-driven computations5. While blockchain technologies play a crucial role in securing data provenance and ensuring tamper-resistance, they complement rather than replace traditional data quality control mechanisms. Therefore, a comprehensive and multi-layered integration framework that combines FAIR and CARE principles, quality control protocols, and provenance assurance is essential for building trustworthy, reusable, and scalable data infrastructure.
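The cleaning, normalization, and metadata-annotation steps listed above can be sketched in a few lines. This is a minimal illustration only: the `Record` type, the unit table `TO_GPA`, and the required metadata keys are assumptions introduced here, not part of any platform described in this review.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical conversion factors to a common unit (GPa); illustrative only.
TO_GPA = {"GPa": 1.0, "MPa": 1e-3, "Pa": 1e-9}
# Assumed minimal metadata schema; real schemas are community-defined.
REQUIRED_METADATA = ("source", "method", "temperature_K")


@dataclass
class Record:
    material: str
    value: Optional[float]
    unit: str
    metadata: dict = field(default_factory=dict)


def clean(records):
    """Drop records with missing values or unknown units."""
    return [r for r in records if r.value is not None and r.unit in TO_GPA]


def normalize(records):
    """Convert every value to GPa so that datasets are comparable."""
    return [
        Record(r.material, r.value * TO_GPA[r.unit], "GPa", dict(r.metadata))
        for r in records
    ]


def missing_metadata(record):
    """List the required metadata keys that a record does not provide."""
    return [k for k in REQUIRED_METADATA if k not in record.metadata]


def integrate(records):
    """Clean, normalize, then split records by metadata completeness."""
    accepted, rejected = [], []
    for r in normalize(clean(records)):
        (rejected if missing_metadata(r) else accepted).append(r)
    return accepted, rejected
```

Records with incomplete metadata are returned separately rather than silently discarded, so that a curator can complete the annotation and resubmit them.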

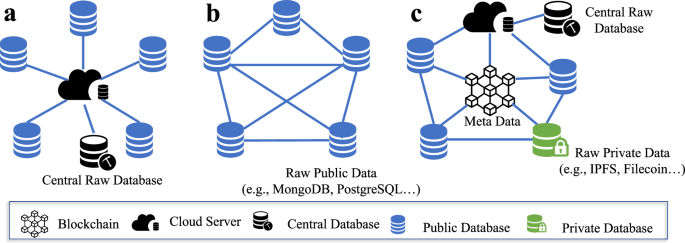

Data integration processes in big-data sharing platforms. (a) Traditional methods: Data providers with public data share raw datasets to a centralized cloud database managed by a supervisory entity. Private data providers, however, are excluded from sharing due to confidentiality concerns. Data consumers access raw data under centralized supervision, which poses risks of privacy breaches and limits inclusivity. (b) Blockchain-based methods: Interactions among providers, consumers, and supervisors occur via smart contracts and a blockchain ledger, which securely manages metadata and transaction records. Actual raw datasets are stored separately using decentralized storage solutions such as IPFS, providing efficient retrieval and redundancy.

Despite these structured steps, materials data integration presents significant challenges due to the intrinsic complexity of materials science. Data heterogeneity71 is a persistent issue, arising from variations in measurement techniques, computational methods, and data representations across scientific disciplines. Semantic inconsistencies frequently occur when research teams adopt unique terminologies and data models, complicating interoperability76,77. Ensuring the traceability of data origins and processing histories becomes even more critical in collaborative, multi-institutional projects where provenance tracking supports reproducibility and trust.

Addressing these challenges requires the adoption of semantic frameworks and ontology-based models78. The Materials Ontology (MatOnt)79 provides standardized representations for crystallographic structures, mechanical properties, and thermodynamic behaviors, facilitating data harmonization and enabling automated analysis across diverse platforms. To address the storage of heterogeneous materials data, the dynamic container model (DCM)67,71 offers a flexible solution. DCM allows users to customize templates tailored to their specific data structures, normalizing original datasets and converting them into containerized formats. These containerized datasets are then resolved into appropriate data structures by database adapters and stored in suitable databases. Additionally, linked data frameworks enhance integration by using persistent identifiers to connect datasets, supporting machine-readable processing pipelines that enable large-scale data exploration and efficient cross-referencing.
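The template-container-adapter flow described for the DCM can be illustrated with a small sketch. It is not the DCM implementation from the cited work: the template format, the `containerize` function, and both adapter classes are hypothetical stand-ins chosen to show the idea.

```python
import json

# Hypothetical user-defined template: field name -> expected Python type.
TENSILE_TEMPLATE = {"sample_id": str, "yield_strength_MPa": float, "notes": str}


def containerize(template_name, template, raw):
    """Validate raw data against a template and wrap it in a container."""
    payload = {}
    for name, expected in template.items():
        if name not in raw:
            raise ValueError(f"missing field: {name}")
        payload[name] = expected(raw[name])  # coerce, e.g. "275" -> 275.0
    return {"template": template_name, "payload": payload}


class DocumentAdapter:
    """Stand-in adapter that stores containers as JSON documents."""

    def __init__(self):
        self.docs = []

    def store(self, container):
        self.docs.append(json.dumps(container, sort_keys=True))


class TableAdapter:
    """Stand-in adapter that flattens a container payload into a row."""

    def __init__(self):
        self.rows = []

    def store(self, container):
        payload = container["payload"]
        self.rows.append(tuple(payload[k] for k in sorted(payload)))


container = containerize(
    "tensile",
    TENSILE_TEMPLATE,
    {"sample_id": "S1", "yield_strength_MPa": "275", "notes": "annealed"},
)
docs, table = DocumentAdapter(), TableAdapter()
docs.store(container)   # same container, document-style storage
table.store(container)  # same container, row-style storage
```

The point of the sketch is that the container is independent of the backend: the same normalized object can be resolved by different adapters into whichever storage structure suits the data.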

Blockchain technology enhances data integration by providing a decentralized, transparent, and immutable data management framework that overcomes the limitations of traditional centralized repositories, such as access restrictions80. Blockchain-based systems enable distributed data repositories with tamper-proof transaction records, ensuring datasets are securely stored while maintaining an auditable history of contributions, modifications, and access events. This guarantees data integrity, supports long-term research reliability, and protects intellectual property. Complementing blockchain’s immutability and provenance capabilities, decentralized storage technologies like the InterPlanetary File System (IPFS)81 provide scalable, fault-tolerant storage solutions essential for managing extensive materials datasets. IPFS employs content-addressable storage mechanisms, offering efficient data retrieval, redundancy, and resilience against single points of failure, thus playing a critical role in robust data integration frameworks. By distributing data across a global network of nodes, IPFS reduces risks associated with hardware failures or cyberattacks through fragmentation and redundant storage82,83.
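The division of labor between off-chain content-addressed storage and on-chain metadata can be sketched as follows. This is a toy model under stated assumptions: `ContentStore` and the `ledger` list are hypothetical in-memory stand-ins, and a plain SHA-256 hex digest is used as the address, whereas IPFS itself uses multihash-based content identifiers (CIDs).

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is the SHA-256 digest of the bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        digest = hashlib.sha256(data).hexdigest()
        self._blobs[digest] = data  # identical content maps to the same key
        return digest

    def get(self, digest: str) -> bytes:
        data = self._blobs[digest]
        # Re-hash on retrieval so that corrupted content is detected.
        if hashlib.sha256(data).hexdigest() != digest:
            raise ValueError("stored content does not match its address")
        return data


ledger = []  # stand-in for metadata records kept on the blockchain


def register(store, data, owner, description):
    """Store the raw bytes off-chain and record only metadata on the ledger."""
    content_id = store.put(data)
    ledger.append(
        {"content_id": content_id, "owner": owner, "description": description}
    )
    return content_id
```

Because the address is derived from the content, any consumer can verify that the bytes they retrieved are the ones referenced on the ledger, without trusting the storage node.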

Data integration frameworks also benefit from automated data validation84 and curation pipelines that guarantee the quality, consistency, and completeness of materials datasets. Blockchain-based smart contracts can enforce data validation protocols by automatically verifying dataset attributes such as file format, metadata completeness, and adherence to predefined quality standards. Upon successful validation, the blockchain can issue access tokens or digital certificates using established token standards, such as ERC-721 (Non-Fungible Token standard) or ERC-1155 (Multi-Token standard), to grant secure, verifiable access to high-quality, validated datasets. The ERC-721 standard enables the creation of unique, non-interchangeable tokens, suitable for issuing unique digital certificates that represent ownership or exclusive access rights to specific datasets. Alternatively, the ERC-1155 standard supports both fungible and non-fungible tokens, enabling more flexible and efficient management when handling multiple access levels or a large number of dataset tokens simultaneously.
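The validate-then-issue pattern can be simulated in plain Python. The sketch below is not an ERC-721 or ERC-1155 contract and calls no blockchain library; `ValidationRegistry`, the allowed formats, and the required metadata fields are assumptions made for illustration. It only mirrors the logic: a dataset that passes the checks receives a unique token identifier bound to its owner.

```python
import itertools

ALLOWED_FORMATS = {"cif", "csv", "json"}           # assumed validation policy
REQUIRED_FIELDS = {"title", "creator", "license"}  # assumed validation policy


class ValidationRegistry:
    """In-memory stand-in for a contract that validates and certifies datasets."""

    def __init__(self):
        self._ids = itertools.count(1)
        self.owner_of = {}    # token_id -> owner
        self.dataset_of = {}  # token_id -> content identifier

    def validate(self, dataset):
        """Return a list of validation errors (empty if the dataset passes)."""
        errors = []
        if dataset.get("format") not in ALLOWED_FORMATS:
            errors.append("unsupported format")
        missing = REQUIRED_FIELDS - set(dataset.get("metadata", {}))
        if missing:
            errors.append("missing metadata: " + ", ".join(sorted(missing)))
        return errors

    def submit(self, owner, dataset):
        """Issue a unique token only if validation succeeds."""
        errors = self.validate(dataset)
        if errors:
            raise ValueError("; ".join(errors))
        token_id = next(self._ids)
        self.owner_of[token_id] = owner
        self.dataset_of[token_id] = dataset["content_id"]
        return token_id
```

Each token identifier here maps to exactly one dataset, which corresponds to the non-fungible case; representing several access levels per dataset would instead call for per-identifier balances in the style of a multi-token design.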

The choice of token standard affects not only technical complexity but also cost (e.g., gas fees) on public blockchain networks such as Ethereum. ERC-1155, for example, supports batch transfers and batch operations, which can reduce transaction fees relative to ERC-721 when many tokens are managed at once. The choice of token standard and blockchain implementation strategy should therefore balance technical functionality, ease of use, and economic considerations to ensure efficient and sustainable management of access controls and digital certificates within blockchain-enabled data-sharing ecosystems.

Trading and Circulation

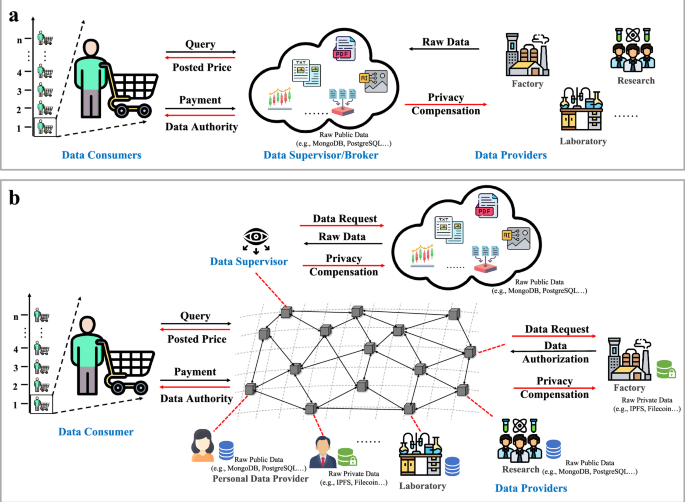

In the age of big data, information has become a crucial asset for businesses, governments, and organizations85. Data holders often recognize the commercial potential of their data and seek to monetize it by offering access for a fee. Simultaneously, data consumers are willing to pay for valuable datasets, creating a dynamic ecosystem for digital commodities. Typical architectures of the trading and circulation process are illustrated in Fig. 3. Among them, centralized data marketplaces operated by government agencies or private enterprises have traditionally facilitated this exchange, functioning as intermediaries for data sharing and payment processing86. However, these centralized systems often encounter limitations such as restricted access and weaknesses in transparency and trust.

Trading and circulation in big-data sharing systems. (a) Traditional Data Trading Model: In a general system model for online personal data markets, there are three primary entities: data owners, a central data supervisor or broker, and data consumers. Data owners provide their raw data to the central server managed by the data supervisor, who acts as an intermediary. The data supervisor collects, organizes, and manages this data, making it available to consumers through an online marketplace. Data consumers access and purchase data by interacting with the central server, which handles transactions and enforces data-sharing agreements. However, this centralized approach requires data owners to relinquish control of their data, posing risks such as privacy breaches, data misuse, and single points of failure. (b) Blockchain-Based Data Trading Model: In blockchain-based data trading systems, data owners retain control over their data, which can remain stored locally or in secure, decentralized storage solutions. All consumer queries, transactions, and payments are facilitated through the blockchain, eliminating the need for a central broker. Smart contracts automate the trading process, ensuring that data-sharing agreements and payments are securely executed and recorded on the blockchain. Consumers access metadata describing the available datasets, and only after meeting pre-defined terms, such as payment or licensing agreements, are they granted access to the data. This decentralized model enhances privacy, transparency, and trust while reducing the risks associated with centralized data markets.

In Material Genome Engineering (MGE), the trading and circulation of materials data are pivotal for advancing research and innovation87,88. Materials data, spanning computational models, experimental results, and material properties, carry substantial intellectual and commercial value. Secure and transparent mechanisms for sharing and monetizing these datasets are essential to ensure equitable access, accurate valuation, and robust protection of intellectual property (IP). Blockchain technology provides a transformative solution by enabling decentralized, secure, and transparent data-trading infrastructures89. Its tamper-proof ledger immutably records all transactions, fostering accountability and trust among stakeholders. This transparency mitigates risks of data misuse, supports robust governance, and ensures that every data exchange is traceable and verifiable. For instance, blockchain can document the entire lifecycle of materials datasets, from generation to application, preserving data integrity, ensuring provenance, and safeguarding IP rights while promoting a sustainable and equitable data-trading ecosystem4.

Smart contracts, a key innovation within blockchain, automate transactional processes such as data-sharing agreements, IP licensing, and revenue distribution. These self-executing digital agreements enforce predefined terms, removing the need for intermediaries. For example, a research institution could implement a smart contract to grant dataset access only after payment or compliance with specific usage terms, with blockchain securely logging all interactions90. This automation streamlines data circulation, reduces administrative overhead, and ensures equitable enforcement of agreements.
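The pay-then-access example can be made concrete with a small simulation. The class below is a plain-Python stand-in for a smart contract, not contract code for any particular chain; `DataAccessContract`, its fields, and the event tuple format are hypothetical names introduced for illustration.

```python
class DataAccessContract:
    """Toy model of a contract that grants access after payment and consent."""

    def __init__(self, owner, price, terms_version):
        self.owner = owner
        self.price = price
        self.terms_version = terms_version
        self.balances = {owner: 0}
        self.granted = set()
        self.events = []  # stand-in for on-chain event logs

    def purchase(self, buyer, payment, accepted_terms):
        """Grant access if the terms are accepted and the price is covered."""
        if accepted_terms != self.terms_version:
            raise PermissionError("usage terms not accepted")
        if payment < self.price:
            raise ValueError("insufficient payment")
        self.balances[self.owner] += self.price
        self.granted.add(buyer)
        self.events.append(("AccessGranted", buyer, self.price))
        return payment - self.price  # any overpayment is refunded

    def can_access(self, user):
        return user == self.owner or user in self.granted


contract = DataAccessContract(owner="institute", price=10, terms_version="v1")
refund = contract.purchase("company", payment=12, accepted_terms="v1")
```

Every state change either completes fully or raises before anything is modified, which mirrors the all-or-nothing behavior expected of a contract transaction.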

Tokenization91,92,93 revolutionizes data trading by transforming datasets into digital assets with clearly defined ownership and usage rights. Through tokenization, datasets can be converted into unique digital tokens that specify access permissions, usage limits, or intellectual property rights. These tokens can be traded, leased, or licensed on decentralized marketplaces, fostering a dynamic data economy and incentivizing collaboration. For instance, a computational research group could tokenize its dataset on high-temperature superconductors94, allowing industrial partners to purchase time-limited or usage-specific access tokens. This approach not only simplifies data monetization but also encourages broader participation in data sharing while maintaining control over dataset usage, thereby unlocking new opportunities for innovation and collaboration in MGE.

Tokenization within blockchain-based Material Genome Engineering (MGE) offers a transformative framework for data sharing and collaboration. By utilizing blockchain’s decentralized architecture, institutions can tokenize proprietary datasets and establish access terms through smart contracts, such as sharing quotas or time-limited permissions. This approach ensures an immutable record of interactions, reducing disputes, promoting equitable distribution of research credits, and enhancing revenue-sharing mechanisms. Such transparency fosters trust and sustainable partnerships among stakeholders, creating a more collaborative research environment. Market-driven incentives, such as token-based reward systems, further amplify the potential of blockchain in MGE by encouraging the contribution of high-quality datasets. For example, contributors to a shared blockchain repository can earn tokens proportional to the relevance and frequency of their datasets’ usage, cultivating a dynamic and equitable ecosystem that advances collaborative materials research.
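One simple way to realize a usage-proportional reward is to score each contributor by relevance multiplied by access count and split a fixed token pool in proportion to those scores. The scoring rule and the function name `distribute_rewards` are assumptions made here for illustration; the text does not prescribe a specific formula.

```python
def distribute_rewards(pool, usage):
    """Split `pool` tokens in proportion to relevance * access count.

    `usage` maps contributor -> (relevance in [0, 1], access_count).
    """
    scores = {c: rel * count for c, (rel, count) in usage.items()}
    total = sum(scores.values())
    if total == 0:
        return {c: 0.0 for c in usage}  # nothing was used, nothing is paid
    return {c: pool * s / total for c, s in scores.items()}


rewards = distribute_rewards(
    pool=100,
    usage={"group_a": (1.0, 30), "group_b": (0.5, 20)},
)
# Scores are 30 and 10, so the pool splits 75 / 25.
```

A multiplicative score means a rarely used but highly relevant dataset and a frequently used but marginal one can earn comparable rewards; other weightings are equally plausible.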

Data-Driven Computation

Data-driven collaborative computation95,96,97,98 forms the foundation of Material Genome Engineering (MGE), powering large-scale simulations, AI-driven predictions, and cross-institutional research. The inherent complexity and heterogeneity of materials data10, spanning atomistic simulations, experimental measurements, and theoretical models, demand robust frameworks that enable seamless integration, secure collaboration, and scalable processing. To address these challenges, blockchain-based architectures offer a transformative solution, eliminating bottlenecks associated with centralized control and single points of failure. By distributing storage and computation across nodes, blockchain ensures resilience, data availability, and consistency, even during network disruptions or cyberattacks. For instance, blockchain-coordinated distributed simulations analyzing perovskite formation energies ensure the integrity of aggregated results while maintaining the confidentiality of institutional datasets5.

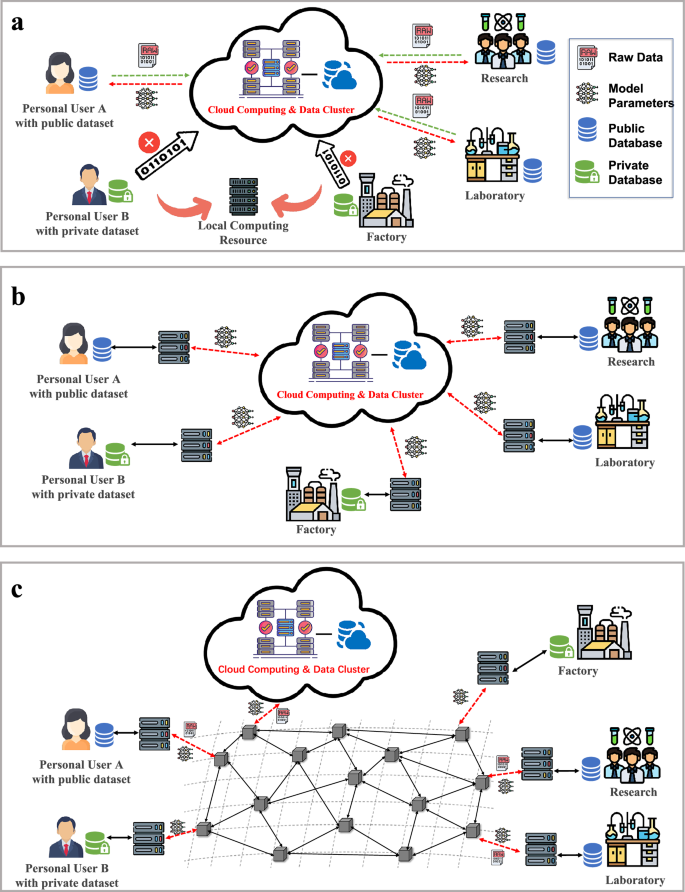

Traditional collaborative computational frameworks, as indicated in Fig. 4, rely on centralized cloud environments where public data can be freely shared and processed using the extensive computational resources of cloud services. While this approach enhances efficiency and supports data-sharing among users of public datasets, it excludes participants with private or sensitive data, as confidentiality concerns prevent them from contributing raw data to the cloud. This limitation creates a dichotomy between public and private data users, restricting comprehensive collaboration and reducing system utility.

Data-driven computation in big-data sharing frameworks evolves from traditional centralized approaches to advanced decentralized methodologies. (a) Traditional collaborative computational frameworks rely on centralized cloud environments, where public data is shared and processed using the substantial computational power of cloud services. This setup enhances learning efficiency and data-sharing among public dataset users but excludes those with private or sensitive data due to confidentiality concerns, restricting them to local computations and creating a divide that limits comprehensive collaboration. (b) Federated Learning (FL) addresses this limitation by enabling decentralized model training, allowing participants to retain local data and share only encrypted model updates with a central aggregator to construct a global model. (c) Swarm Learning, an evolution of FL, integrates blockchain technology to eliminate centralized aggregators, decentralizing computations and model coordination through blockchain-enabled consensus mechanisms. Participants retain full control over their data while sharing encrypted updates via a secure blockchain network.

Federated Learning (FL)47,99,100 introduces a decentralized approach that allows institutions to train a shared global model without sharing raw data. Each participant retains proprietary datasets locally, performing computations on their own infrastructure and sharing only encrypted model updates with a central aggregator. While FL preserves data confidentiality and enables collaborative learning, its reliance on centralized aggregation poses risks such as single points of failure and limited transparency in coordination processes. Blockchain augments FL by recording encrypted updates immutably, enhancing accountability and reproducibility100.
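The aggregation step can be illustrated with sample-weighted federated averaging, a common choice for combining local updates. The sketch below makes several simplifying assumptions: model weights are plain lists of floats, encryption of the updates is omitted, and `update_digest` is a hypothetical helper showing what might be recorded on a ledger for later audit.

```python
import hashlib
import json


def federated_average(updates):
    """Average model weights, weighting each participant by its sample count.

    `updates` is a list of (num_samples, weights) pairs, where every
    `weights` list has the same length.
    """
    total = sum(n for n, _ in updates)
    dim = len(updates[0][1])
    return [sum(n * w[i] for n, w in updates) / total for i in range(dim)]


def update_digest(weights):
    """Fingerprint of an update, suitable for recording on a ledger."""
    return hashlib.sha256(json.dumps(weights).encode()).hexdigest()


updates = [
    (100, [1.0, 2.0]),  # institution A: 100 local samples
    (300, [3.0, 6.0]),  # institution B: 300 local samples
]
global_weights = federated_average(updates)  # [2.5, 5.0]
audit_trail = [update_digest(w) for _, w in updates]
```

Only the weight vectors and their digests leave each institution; the raw training data never appears in the aggregation.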

Swarm Learning, an advanced iteration of blockchain-based FL34, eliminates centralized aggregation entirely, leveraging blockchain-enabled consensus mechanisms to coordinate model updates in a decentralized manner. This approach allows participants to maintain full control over their data while sharing encrypted updates through a secure blockchain network. Smart contracts automate processes such as model synchronization, validation, and contribution tracking, fostering transparency and trust. Already applied in healthcare to predict disease outcomes and improve diagnostics34, swarm learning has demonstrated its potential to leverage distributed data effectively while preserving privacy. In MGE, MatSwarm5 applies swarm learning principles, enabling institutions to collaborate securely without exposing proprietary datasets. This decentralized framework enhances data privacy and fosters robust predictive modeling for material properties, paving the way for breakthroughs in materials discovery and AI-driven innovation.

Secure Multi-Party Computation (MPC)101 further complements blockchain-based data-driven computation by enabling collaborative analysis of encrypted datasets while maintaining strict confidentiality. Blockchain serves as a trustless coordinator, managing encrypted data exchanges and ensuring result verification through tamper-proof audit trails. For example, institutions conducting joint research on high-temperature superconductors can securely analyze thermal and mechanical properties using MPC, preserving data privacy while ensuring the integrity and fairness of the collaboration102.
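Additive secret sharing is one of the simplest MPC building blocks and shows how a joint result can be computed without revealing individual inputs. The sketch below is illustrative only: it runs all parties in one process, assumes honest participants and non-negative integer inputs smaller than the modulus, and the function names are introduced here rather than taken from the cited work.

```python
import secrets

PRIME = 2**61 - 1  # field modulus (a Mersenne prime)


def share(value, n_parties):
    """Split `value` into n random shares that sum to it modulo PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    """Recombine shares; any subset smaller than all of them reveals nothing."""
    return sum(shares) % PRIME


def secure_sum(private_values):
    """Sum the inputs while each party only ever sees random-looking shares."""
    n = len(private_values)
    # Party i splits its input and sends the j-th share to party j.
    all_shares = [share(v, n) for v in private_values]
    # Party j locally adds the shares it received, one from every party.
    partial = [sum(all_shares[i][j] for i in range(n)) % PRIME for j in range(n)]
    # Only the partial sums are published and combined.
    return reconstruct(partial)


# Three institutions each hold a private measurement count.
total = secure_sum([120, 95, 143])  # 358
```

In a blockchain-coordinated setting, the published partial sums (or their hashes) are the natural items to log for verification, since they reveal nothing about any single input.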

Blockchain’s immutability103 strengthens these computational frameworks by ensuring transparent and traceable data usage histories. Every transaction, from dataset access to model updates and computation execution, is recorded immutably, fostering reproducibility and accountability. Smart contracts simplify administrative workflows by automating data-sharing agreements, licensing conditions, and attribution requirements. Researchers can submit datasets, trigger tasks, and access results seamlessly, as smart contracts validate inputs and coordinate activities across decentralized platforms. These blockchain-enabled workflows enhance computational efficiency, ensure consistency, and accelerate multi-step research processes, driving real-time collaboration and improving the reproducibility of scientific outcomes. Moreover, blockchain-stored metadata maintains standardized references across diverse systems, enabling predictive models and analytical tools to utilize comprehensive and validated datasets effectively.
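The tamper-evidence behind such usage histories comes from chaining records by hash: each entry commits to the one before it, so altering any past record invalidates everything after it. The following sketch shows that mechanism in isolation, without consensus or networking; the event fields and function names are illustrative assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash, event):
    """Hash an event together with the hash of the preceding entry."""
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append(log, event):
    """Append an event, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log):
    """Recompute every link; return False if any entry was altered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"actor": "lab-a", "action": "dataset_access", "dataset": "ds-1"})
append(log, {"actor": "lab-b", "action": "model_update", "round": 3})
append(log, {"actor": "lab-a", "action": "computation", "task": "t-9"})
```

A real ledger adds replication and consensus on top of this structure, which is what prevents a single party from rewriting the whole chain consistently.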

Governance

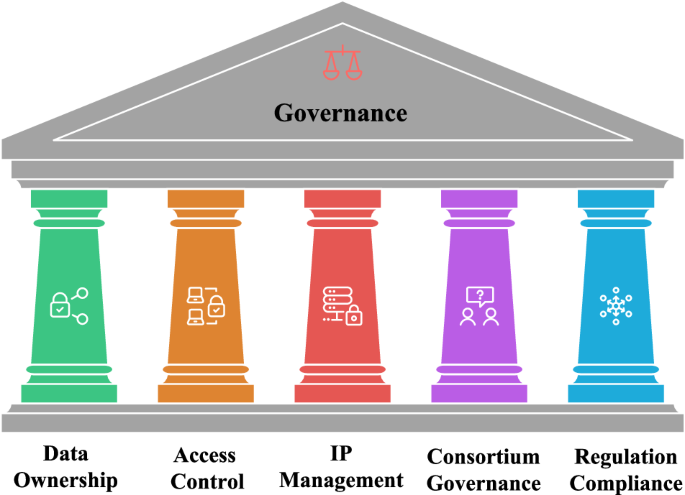

Governance36,104 in Material Genome Engineering (MGE) is foundational for secure, transparent, and equitable data management in multi-institutional research environments. It encompasses the roles, responsibilities, and decision-making protocols essential for compliance, trust-building, and safeguarding the rights of contributors, as shown in Fig. 5. Effective governance frameworks ensure collaborative research thrives by balancing complex data-sharing arrangements and organizational decisions across diverse stakeholders. Traditional governance often relies on centralized decision-making structures, which may conflict with the decentralized ethos of blockchain systems. An emerging alternative is decentralized autonomous organizations (DAOs), which leverage blockchain technology and smart contracts to facilitate transparent, collective governance without centralized authorities.

Governance in big-data sharing frameworks. Governance plays a pivotal role in big-data sharing frameworks, ensuring secure, transparent, and equitable management of data across diverse stakeholders. Key aspects of governance include data ownership, which defines rights and responsibilities over datasets; access control, which establishes who can access data, under what conditions, and for what purposes; and intellectual property (IP) management, which governs the registration, licensing, and fair distribution of benefits arising from data usage. Effective governance also extends to consortium management, facilitating collective decision-making and dispute resolution in multi-institutional collaborations. Additionally, ensuring compliance with regulatory standards is crucial for aligning data-sharing practices with legal and ethical requirements, fostering trust and reliability in the framework.

DAOs operate via smart contracts that encode rules for membership, voting procedures, and decision-making protocols directly onto a blockchain. Decisions in a DAO require consensus among members, providing transparency and minimizing disputes over organizational management, data-sharing policies, or intellectual property distribution. Such decentralized governance mechanisms are increasingly being piloted across research communities and data-centric collaborations, including scientific data-sharing initiatives, to promote equitable participation, transparent decision-making, and scalable coordination across distributed teams, e.g., Genomes.io, GenomesDAO, VitaDAO, and LabDAO.
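The membership and voting rules that a DAO encodes can be sketched as a one-member-one-vote tally with a quorum and an approval threshold. This is a plain-Python illustration, not code from any of the named DAOs; the class names, the default quorum of 50%, and the strict-majority threshold are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    description: str
    votes: dict = field(default_factory=dict)  # member -> True / False


class MiniDAO:
    """Toy governance model: membership check, quorum, approval threshold."""

    def __init__(self, members, quorum=0.5, threshold=0.5):
        self.members = set(members)
        self.quorum = quorum        # minimum fraction of members who must vote
        self.threshold = threshold  # fraction of cast votes that must be exceeded

    def vote(self, proposal, member, approve):
        if member not in self.members:
            raise PermissionError("not a member")
        proposal.votes[member] = approve  # one vote per member; re-voting overwrites

    def outcome(self, proposal):
        cast = len(proposal.votes)
        if cast == 0 or cast / len(self.members) < self.quorum:
            return "no quorum"
        yes = sum(proposal.votes.values())
        return "passed" if yes / cast > self.threshold else "rejected"


dao = MiniDAO(["lab-a", "lab-b", "lab-c", "lab-d"])
proposal = Proposal("Open dataset ds-1 to consortium members")
dao.vote(proposal, "lab-a", True)
early = dao.outcome(proposal)  # only 1 of 4 members has voted
dao.vote(proposal, "lab-b", True)
dao.vote(proposal, "lab-c", False)
final = dao.outcome(proposal)  # 3 of 4 voted, 2 of 3 approve
```

Token-weighted voting, which several DAOs use, would replace the per-member count with a balance-weighted sum and raises the capture and bias concerns noted later in this section.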

Examples of DAO-governed data and workflows

GenomesDAO/Genomes.io demonstrates community-governed policies for accessing sensitive genomic resources via a participant-controlled “DNA Vault,” with governed consent and query rules recorded on-chain. VitaDAO coordinates funding and outputs in longevity science through token-governed proposals and transparent review processes, providing an operational template for community-governed research artifacts and associated metadata policies. LabDAO organizes distributed labs and tooling via DAO primitives and decentralized storage (e.g., IPFS), enabling shared workflows and machine-actionable records of inputs/results. In parallel, Ocean Protocol’s Compute-to-Data and DataDAO patterns illustrate how DAO governance can manage datasets and enable privacy-preserving analytics without exposing raw data, aligning incentives among data stewards and model developers105. While promising, these experiments also surface open challenges in long-term sustainability, contributor incentives, regulatory alignment, and ethics.

Despite their potential, DAOs also face practical challenges, including complexity in managing consensus across diverse stakeholders, potential for governance attacks, and limitations in scalability. For example, recent work highlights how DAOs can effectively manage large-scale scientific collaborations by automating decision-making processes and resource allocation, yet also emphasizes challenges related to stakeholder engagement, voting efficiency, and legal compliance106,107. Addressing these challenges requires careful consideration of organizational protocols and consensus mechanisms to ensure DAOs can sustainably support data governance in material genome engineering networks.

In summary, integrating DAO-based governance frameworks into blockchain-enabled data-sharing ecosystems offers considerable advantages for transparency and equity but requires careful design and clear protocols to manage inherent complexities.

At the core of governance lies data ownership73, which determines who controls and benefits from the datasets generated through computational models, experimental measurements, and industrial collaborations. Ownership frameworks must clearly delineate rights and responsibilities to protect contributions and establish terms for data usage and commercialization. For instance, in a consortium developing next-generation battery materials, ownership agreements ensure that each institution’s contributions are protected and appropriately recognized, fostering fair collaboration and innovation.

Access control108 is another critical pillar, dictating who can access data, under what conditions, and for what duration. These policies must balance openness with confidentiality. Public datasets, such as experimental data on common metals, can foster widespread innovation, while high-value proprietary datasets, such as advanced alloys with significant commercial potential, require restricted access governed by intellectual property (IP) agreements. Well-defined access policies prevent misuse and ensure compliance with legal and ethical standards.
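The three questions an access policy must answer (who, under what conditions, and for how long) map directly onto a small data structure. The sketch below is a minimal illustration in which the `Grant` and `AccessPolicy` names, the purpose field, and the explicit `now` argument are assumptions; a deployed system would record grants on the ledger rather than in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    user: str
    dataset: str
    purpose: str          # the condition under which access is allowed
    expires_at: datetime  # the duration of the grant


class AccessPolicy:
    """Public datasets are open; proprietary ones need an unexpired grant."""

    def __init__(self, public_datasets):
        self.public = set(public_datasets)
        self.grants = []

    def grant(self, user, dataset, purpose, valid_for, now):
        self.grants.append(Grant(user, dataset, purpose, now + valid_for))

    def is_allowed(self, user, dataset, purpose, now):
        if dataset in self.public:
            return True
        return any(
            g.user == user
            and g.dataset == dataset
            and g.purpose == purpose
            and now < g.expires_at
            for g in self.grants
        )


t0 = datetime(2025, 1, 1, tzinfo=timezone.utc)
policy = AccessPolicy(public_datasets={"common-metals"})
policy.grant("partner", "advanced-alloys", "research", timedelta(days=30), now=t0)
```

Passing `now` explicitly keeps the check deterministic and testable; on a blockchain the equivalent value would come from the block timestamp.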

IP management109,110 is integral to governance due to the commercial and academic value embedded in materials data. Governance frameworks must establish clear rules for IP registration, licensing, and revenue sharing, particularly in industry-academic collaborations. For example, joint patents and royalty distribution terms can be pre-defined, ensuring all stakeholders receive fair recognition and compensation. These mechanisms promote trust and encourage participation in large-scale collaborative efforts.

Consortium-based governance models111 support complex, multi-stakeholder collaborations by creating collective decision-making processes. Participating institutions often appoint representatives to a regulatory body or steering committee responsible for ensuring compliance, resolving disputes, and sustaining project operations. Examples such as the Materials Project12 and NOMAD Laboratory16 showcase how shared governance principles enable successful international collaborations. These models can be tailored to ensure efficient decision-making while accommodating diverse stakeholder interests.

Legal and ethical compliance is a cornerstone of governance, particularly for international collaborations. Governance frameworks must align with regulations such as the General Data Protection Regulation (GDPR)112 and with industry-specific standards. Harmonizing policies across jurisdictions reduces the risk of disputes and facilitates project execution while upholding ethical research practices.

Effective dispute resolution mechanisms are crucial for managing conflicts over data ownership, agreement breaches, or credit allocation. Blockchain-enabled arbitration systems can support transparent and impartial resolutions because they rely on tamper-proof records, which helps preserve trust among collaborators. For instance, a dispute over the usage of a shared dataset can be settled by checking the recorded access history against the agreed terms, supporting fair outcomes and maintaining the integrity of collaborative research efforts.

Blockchain technology enhances governance in Material Genome Engineering by providing a transparent and tamper-proof system for recording and automating governance actions. Smart contracts enforce data-sharing agreements by checking each request against predefined rules and logging the outcome immutably, which supports compliance and traceability. For example, when researchers access datasets, blockchain systems securely record these events and verify adherence to predefined rules, reducing administrative overhead and promoting accountability.

Attribution protocols and data usage permissions are equally critical for equitable recognition of contributions. Governance frameworks must define how credit is allocated across publications, patents, and industrial applications. For instance, in collaborative efforts to develop machine-learning models predicting material properties, blockchain-based smart contracts can automate attribution rules and royalty distribution, embedding these processes directly into the data-sharing pipeline to ensure fairness and transparency5.
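As an illustration of how an automated royalty rule might work, the following sketch splits a payment among contributors in proportion to pre-agreed weights. The function name `split_royalty`, the contributor names, and the weights are hypothetical. Largest-remainder rounding is one possible choice, used here so that the integer shares always sum exactly to the amount paid; an actual contract could adopt a different rounding rule.

```python
from fractions import Fraction


def split_royalty(amount_cents, weights):
    """Split an integer amount pro rata by non-negative integer weights.

    Largest-remainder rounding keeps the sum of shares equal to the total.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")

    # Exact (fractional) entitlement of each contributor.
    exact = {k: Fraction(amount_cents * w, total_weight) for k, w in weights.items()}
    # Round down first; int() truncates, which equals floor for non-negative values.
    shares = {k: int(v) for k, v in exact.items()}
    leftover = amount_cents - sum(shares.values())

    # Hand out the remaining cents to the largest fractional remainders,
    # breaking ties alphabetically so the result is deterministic.
    order = sorted(exact, key=lambda k: (-(exact[k] - shares[k]), k))
    for k in order[:leftover]:
        shares[k] += 1
    return shares


# Hypothetical consortium with pre-agreed contribution weights.
print(split_royalty(10000, {"university": 5, "institute": 3, "company": 2}))
# {'university': 5000, 'institute': 3000, 'company': 2000}
```

Exact rational arithmetic avoids the floating-point drift that would otherwise make small payments fail to add up.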

Despite early successes, data networks governed by decentralized autonomous organizations (DAOs) remain nascent in science. Key open issues include governance capture risks, token-voting biases, resource sustainability, and compliance and ethics for sensitive data; addressing these requires continued empirical evaluation and policy-aware design.

Security and Privacy

Blockchain technology significantly enhances security and privacy through its capabilities in data encryption, trust management, and smart contract automation111,113,114. At the core of these innovations is blockchain’s tamper-proof system, which securely records and automates actions related to data access and sharing. By leveraging immutable ledgers, blockchain ensures that all events are transparent and traceable. For instance, when a researcher accesses a dataset, the system logs the event immutably and verifies adherence to predefined rules, reducing administrative overhead and ensuring accountability. This transparency is foundational for building trust among collaborators, particularly in multi-institutional research environments.
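The tamper-evidence property described above can be sketched with a hash-chained, append-only log, in which each entry commits to the hash of its predecessor. This is a simplified single-machine illustration, not a blockchain: it has no consensus, replication, or signatures, and the class `AccessLog` and the event fields are hypothetical.

```python
import hashlib
import json


class AccessLog:
    """Append-only log in which each entry commits to the one before it."""

    GENESIS = "0" * 64  # placeholder predecessor hash for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, event):
        # Canonical JSON (sorted keys) so equal events always hash equally.
        payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"prev": prev_hash, "event": event,
                 "hash": self._digest(prev_hash, event)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AccessLog()
log.append({"user": "researcher-17", "dataset": "battery-cathodes", "action": "read"})
log.append({"user": "researcher-42", "dataset": "battery-cathodes", "action": "download"})
print(log.verify())  # True: the chain is intact

log.entries[0]["event"]["action"] = "download"  # simulate an after-the-fact edit
print(log.verify())  # False: the stored hash no longer matches the event
```

In a deployed system the same check is performed independently by many nodes, so an altered record would have to be changed consistently across the network to go unnoticed.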

Data encryption111,115 lies at the core of blockchain’s privacy-preserving capabilities, enabling both secure storage and transmission of sensitive datasets. Cryptographic techniques play complementary roles: asymmetric encryption116 keeps data confidential and accessible only to authorized users, hash functions117 make any modification of a record detectable and so protect its integrity throughout the transaction lifecycle, and zero-knowledge proofs60 allow a party to prove a statement about data without revealing the data itself. In Material Genome Engineering, where datasets often include proprietary or commercially sensitive information, schemes such as AES-256 or elliptic curve cryptography118 safeguard transaction records and datasets against unauthorized access. Additionally, homomorphic encryption62 allows computations on encrypted data, enabling collaborative research without exposing sensitive information. Together, these methods establish a robust foundation for secure collaboration in decentralized environments.

Trust management is enhanced by blockchain’s decentralized architecture, which reduces reliance on intermediaries and central authorities. Consensus mechanisms differ significantly between public blockchains and private or consortium blockchains. Public blockchains typically use protocols such as Proof of Work (PoW) or Proof of Stake (PoS), which provide high levels of decentralization but often struggle with throughput limitations; PoW in particular carries high computational and energy overheads. Conversely, private or consortium blockchains commonly implement mechanisms such as Practical Byzantine Fault Tolerance (PBFT), Raft, or Proof of Authority (PoA). PBFT tolerates Byzantine (arbitrarily faulty) participants, whereas Raft tolerates only crash failures and therefore assumes that all members follow the protocol. Because the validator set is known and limited, these algorithms generally offer improved scalability, higher transaction throughput, and lower latency, making them more practical and efficient for enterprise or research-oriented applications in Material Genome Engineering (MGE).
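The practical difference between these consortium protocols can be made concrete with their standard fault-tolerance bounds: PBFT requires n ≥ 3f + 1 replicas to tolerate f Byzantine faults, while Raft needs only a simple majority of nodes to be alive and tolerates crash failures alone. The helper functions below are an illustrative sketch of this arithmetic; their names are not taken from any library.

```python
def pbft_max_byzantine(n):
    """Largest f satisfying n >= 3f + 1, PBFT's bound on Byzantine replicas."""
    return (n - 1) // 3


def raft_majority(n):
    """Number of nodes that must agree for Raft to commit an entry."""
    return n // 2 + 1


def raft_max_crashed(n):
    """Crash failures Raft survives while a majority remains available."""
    return (n - 1) // 2


# Consortium sizes are illustrative.
for n in (4, 7, 10):
    print(n, pbft_max_byzantine(n), raft_majority(n), raft_max_crashed(n))
# 4 1 3 1
# 7 2 4 3
# 10 3 6 4
```

For the same number of member institutions, Raft survives more failed nodes but offers no protection against a member that misbehaves, which is why the choice depends on how much the consortium members trust one another.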

In cross-border collaborations, where institutional policies and legal frameworks often vary, blockchain’s decentralized trust model ensures that participants adhere to mutually agreed-upon rules without requiring pre-existing trust relationships. For example, distributed trust can be reinforced using verifiable credentials stored on the blockchain, allowing participants to validate identities and permissions without relying on a central authority. By combining transparency with cryptographic verification, blockchain minimizes risks and fosters seamless global collaboration.

Smart contracts amplify blockchain’s privacy-preserving capabilities by automating data-sharing agreements and eliminating the need for third-party intermediaries119,120. These self-executing contracts enforce predefined conditions, ensuring that sensitive datasets are shared only under authorized terms. For instance, a consortium developing advanced alloys can employ smart contracts to manage access permissions, enforce embargo periods, and automate royalty distributions based on usage metrics. This approach streamlines processes, reduces administrative burdens, and safeguards sensitive information by ensuring compliance with privacy and licensing agreements. Fine-grained access controls121,122 further support privacy preservation by allowing data owners to specify detailed permissions, such as restricting access to specific dataset fields or imposing time-limited conditions. When combined with encryption and smart contracts, these controls ensure that data is accessible only to those meeting specified criteria, protecting both individual privacy and organizational interests.
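The contract logic described above (embargo periods, field-level permissions, and usage metering) can be sketched in plain Python. This simulates the rules only; it is not code for any smart-contract platform, and the class `SharingAgreement`, the party names, and the record values are hypothetical.

```python
from datetime import date


class SharingAgreement:
    """Hypothetical agreement: embargo date, per-party readable fields, usage counter."""

    def __init__(self, embargo_until, field_permissions):
        self.embargo_until = embargo_until
        self.field_permissions = field_permissions  # party -> set of readable fields
        self.usage = {}                             # party -> number of granted reads

    def read(self, party, record, today):
        """Return only the fields this party may see, or raise PermissionError."""
        if today < self.embargo_until:
            raise PermissionError("dataset is under embargo")
        allowed = self.field_permissions.get(party)
        if not allowed:
            raise PermissionError(f"{party} has no permissions on this dataset")
        # Meter the access; such counts could later feed a royalty calculation.
        self.usage[party] = self.usage.get(party, 0) + 1
        return {k: v for k, v in record.items() if k in allowed}


# Illustrative record and permissions (values are made up).
record = {"composition": "alloy-X", "yield_strength_mpa": 950, "process_route": "confidential"}
agreement = SharingAgreement(
    embargo_until=date(2025, 1, 1),
    field_permissions={"partner-lab": {"composition", "yield_strength_mpa"}},
)

print(agreement.read("partner-lab", record, today=date(2025, 3, 1)))
# {'composition': 'alloy-X', 'yield_strength_mpa': 950}
print(agreement.usage)  # {'partner-lab': 1}
```

The confidential `process_route` field never leaves the agreement for this party, and a request made before the embargo date is rejected outright.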

Blockchain’s dispute resolution mechanisms123,124 enhance trust and compliance by providing transparent and tamper-proof solutions for conflicts over data ownership, usage breaches, or attribution disputes. Blockchain-enabled arbitration systems offer impartial adjudication supported by verifiable records, ensuring fair and efficient resolutions. For example, disagreements regarding the usage of shared datasets can be swiftly addressed through blockchain’s immutable ledger, preserving trust among collaborators and maintaining the integrity of agreements38,123. By combining these capabilities with data encryption, decentralized trust mechanisms, and privacy-preserving smart contracts, blockchain establishes a comprehensive framework for secure and transparent data-sharing. This robust framework addresses the complexities of multi-stakeholder collaborations while enabling ethical and efficient research practices, positioning blockchain as a transformative tool for the future of data-driven innovation.
